Editorial: Find, fix failures to restore trust in Boeing, FAA

Published 1:30 am Sunday, May 19, 2019

By The Herald Editorial Board

The Boeing Co. announced late last week that it had completed work to rewrite the software faulted in two deadly crashes of its 737 Max airplanes, killing 346 passengers and crew. But before some 350 of the Renton-built 737 Max planes now grounded worldwide will fly again, the new software will need to be tested and recertified, and authorities will need to determine what additional pilot training will be needed.

There’s a longer path ahead for Boeing and the Federal Aviation Administration to restore trust and confidence among Boeing’s airline customers and the flying public, following revelations about how each addressed its responsibilities before and after the air disasters: the crash of Lion Air Flight 610 on Oct. 29, 2018, shortly after takeoff from Jakarta, Indonesia; and the crash of Ethiopian Airlines Flight 302 on March 10, in Ethiopia.

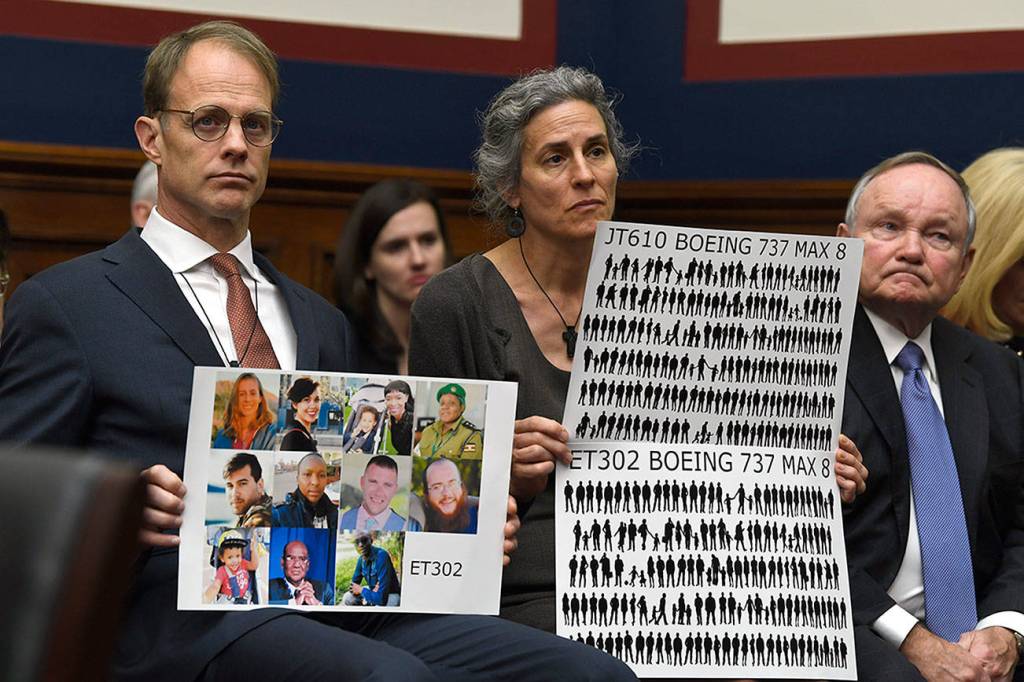

Last week also marked the start of an investigation by the House Aviation Subcommittee, chaired by Rep. Rick Larsen, D-Washington, whose 2nd District includes Boeing’s Everett plant.

Larsen set the tone at Wednesday’s hearing: “One thing is clear right now: The FAA has a credibility problem. The FAA needs to fix its credibility problem.”

Yet in the aftermath of both crashes, investigations and revelations, as well as last week’s testimony, point to shared responsibility for errors and omissions by the aerospace company and its federal regulator.

Among recent reports and testimony:

Boeing said it knew about a software problem related to its MCAS system, which was designed to help prevent stalls, at least a year before the first air disaster, but didn’t inform the FAA until after the Lion Air crash. That raises questions about why the FAA took no action to ground the 737 Max planes until after the second crash, and then only after other air safety authorities had grounded the planes in their countries.

Testimony by acting FAA Administrator Daniel Elwell raised the issue of how well pilots had been trained on and informed about the MCAS system. “I thought the MCAS should have been more adequately explained” to pilots, he told the subcommittee.

The Wall Street Journal reported last week that an internal FAA review found that senior officials had neither participated in nor monitored safety reviews of the 737 Max’s new in-flight control systems. The report did not allege that Boeing officials had provided false data or ignored certification standards, but it wasn’t clear how closely the FAA had reviewed information provided by Boeing before certifying the aircraft.

The investigations, including those by the Justice Department and the U.S. Department of Transportation, already have raised policy questions that Congress and others need to discuss, in particular the FAA’s certification system, which delegates much of the review of components and systems to employees within Boeing and other aerospace companies.

That system isn’t new, Elwell said, telling Larsen’s subcommittee that “We’ve had delegation of authority since 1927,” even before the creation of the FAA.

Countered Larsen: “Just because it has evolved since 1927 doesn’t mean it’s evolved to the place where it needs to be or should be.”

The FAA could never hope to have enough employees to inspect and certify all commercial aircraft, and the manufacturers’ employees likely are better informed about their own systems. But the doubts over communication and oversight between the regulator and its designees need a full discussion by Congress and others.

There also needs to be a review and study — and not just regarding commercial aviation — about the level of trust that is placed in the “infallibility” of software and automated systems.

Boeing, in addressing the 737 Max’s software deficiencies, has added redundancy by having the MCAS system compare readings from both of the plane’s “angle of attack” sensors rather than relying on one. But it has also reportedly reduced the “strength” of the automated system that corrects the angle of the plane’s nose, so that pilots won’t have to fight to regain control as did the crews of the two doomed flights.

Such automated systems are necessary for modern jet aircraft and other complex systems to warn of and even prevent human error, but it’s a frightening thought that “machines” can lock out human decision making. There were procedures for turning off the MCAS system, but it’s unclear how familiar crews were with the system and the steps for overriding it.

Authorities and officials also need to rein in recent attempts to blame pilot error for the crashes before investigations are complete, especially when that blame is tied to accusations that the planes’ “foreign” pilots weren’t as well trained as U.S. pilots.

National Transportation Safety Board Chairman Robert Sumwalt addressed such comments during the hearing: “If an aircraft manufacturer is going to sell airplanes all across the globe, then it’s important that pilots who are operating those airplanes in those parts of the globe know how to operate them. Just to say that the U.S. standards are very good — this might be a problem with other parts of the globe — I don’t think that’s part of the answer.”

It’s not known how soon the 737 Max may return to service, but the crashes of the Lion Air and Ethiopian Airlines flights, and the response by Boeing and the FAA before and after those tragedies, have arguably degraded confidence in both. As the investigations and hearings continue, only full and candid cooperation can hope to restore that trust.