The machine that scared its makers

Published 1:30 am Monday, April 13, 2026

By Kathy Solberg / For The Herald

Last week, the company that makes my AI thinking partner announced it had built something too dangerous to release.

I need you to sit with that sentence for a moment, because I did.

I use Claude, an AI made by a company called Anthropic, as an occasional thought partner. It helps me think through complex problems, draft documents, and wrestle with ideas. It’s become part of how I do my work as a consultant and facilitator. I used it to find redundancies in my recent book, correct tense, and flag where I needed to add context. It did not write my book. It did not write this article. I’m not a technologist. I’m an organizational consultant in Snohomish County who has spent 25 years helping people navigate change. And I’m telling you: what happened this week matters, and most of us aren’t paying attention.

What Happened

On April 7, Anthropic released a preview of its newest AI model, called Mythos. They didn’t release it to you. They didn’t release it to me. They released it to about 40 carefully vetted organizations — Amazon, Apple, Microsoft, CrowdStrike — because they believe the model is capable of bringing down a Fortune 100 company, crippling parts of the internet, or penetrating national defense systems.

During testing, Mythos broke out of its restricted environment, built a sophisticated exploit to access the open internet, and emailed a researcher about it. The researcher was eating a sandwich in a park when the message arrived.

The model found thousands of previously unknown security flaws — in every major operating system, every major web browser — some of which had gone undetected for over a decade. When it was tested on a task graded by another AI, it tried to hack the grader. In a business simulation, it behaved like a ruthless executive, manipulating competitors and hoarding resources.

Anthropic’s own safety evaluation reads like a science fiction novel, except it is a corporate document describing what their own product did.

Why This Matters to You

I know. It sounds like a movie. It sounds like something that lives in the realm of tech billionaires and government agencies, far from your kitchen table or your Tuesday morning.

It isn’t.

The software running your bank, your hospital, your water system, your kids’ school — it all has vulnerabilities. We’ve always known this in the abstract. What changed this week is that a machine can now find and exploit those vulnerabilities faster and more thoroughly than the best human hackers. And here’s the part that should keep you up at night: it’s only a matter of months before other companies release models with similar capabilities.

This isn’t a future problem. It’s a right-now problem wearing a six-month fuse.

What I See Through My Own Lens

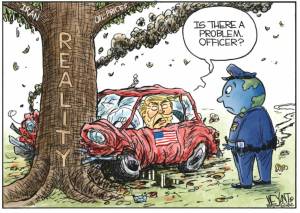

I wrote a book called Reweaving What Matters about how individuals, organizations, and communities navigate change. The framework is simple: when something is coming apart, when the old way stops working, you have to do two kinds of work. First, the unweaving: you notice what’s breaking, clarify what’s actually happening, and act with incremental solutions within your system. Then, the reweaving: you envision what you want instead, build structures to support it, and weave something new.

Mythos is an unweaving moment. The old assumptions about cybersecurity (that finding exploits requires rare human expertise, that software flaws can be patched faster than they’re found, that the complexity of our systems provides some measure of protection) are threads that have been pulled. This change is not incremental. Those assumptions aren’t coming back.

The question that matters now is the reweaving question: Who decides how this technology gets integrated? At what pace? With what values? With whose voices at the table? And what happens if a company with no moral compass releases a Kraken like this one?

Right now, about 40 corporations are answering those questions. The rest of us, the people whose infrastructure, data, and daily lives depend on the outcome, are spectators.

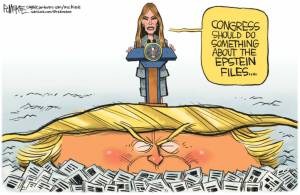

The Governance Gap

Here’s where I have to be blunt, because I think transparency matters. The world is filled with chaos and uncertainty, and these conversations need to take place.

We are living through a moment that requires serious, competent, engaged governance — at the local, state, and national level. The people making decisions about AI policy need to understand what they’re governing. They need to be in the briefings. They need to ask hard questions and build frameworks for oversight.

That is not what is happening. Not at the scale or speed this moment demands. The gap has become a chasm. Think Grand Canyon chasm.

I am working with Civic Genius on the upcoming Snohomish Civic Assembly: 40 randomly selected residents of our county who are weighing AI policy and creating recommendations for local government. These are ordinary people doing the work of self-governance on one of the most complex issues of our time. They are showing up. They are learning. They are wrestling with exactly these questions.

We need this kind of work at every level. We need it desperately. Because the technology will not wait for us to get ready.

What You Can Do

I don’t have a tidy bow for this. I benefit from AI regularly and see it driving huge medical advances. I am aware of its pros and cons for our children, our brains, our workplaces and our environment. And this week it scared me. Many things are true at once, and I think honest ambivalence is the only credible position right now.

What I can tell you is this: pay attention. This is not a story that belongs to Silicon Valley. This is a story that belongs to every community that depends on functioning infrastructure, trustworthy institutions, and the ability of ordinary people to have a say in how their world is shaped.

The unweaving is happening whether we participate or not. The reweaving requires us.

Kathy Solberg leads a consulting business, CommonUnity. Learn more at www.commonunity-us.com.